What is Redis Cluster

Installing and Configuring a Redis Cluster on Linux

A Redis cluster is often used as a data store, a cache, a message broker, and for other tasks. It has become popular thanks to its scalability and high speed. This article gives instructions for building a cluster on three servers that provides data partitioning (sharding) and high availability through replication. In this configuration, if a master node fails, its slave automatically takes over.

This article is a translation and adaptation of an English-language article.

As an in-memory store, Redis handles operations such as counting, caching, and queueing at high speed. Setting up a cluster makes Redis considerably more reliable by removing the single point of failure.

Installing Redis on each server

Depending on your Linux distribution, Redis may be available through the package manager. This guide covers installing the current stable version from source.

First, install the dependencies required for the build:

Download the current stable branch and extract the source code from the archive:

Make sure the build tests pass:

On success, the console shows the following response:

Repeat the installation on every server that will be part of the cluster.

Настройка узлов Master и Slave

В данной инструкции каждый master будет подключен к одному slave.

Для более удобной работы с несколькими терминалами рекомендуем использовать tmux.

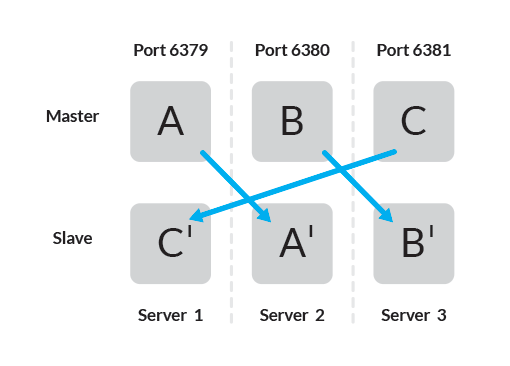

Официальная документация рекомендует использовать 6 узлов — по одному экземпляру Redis на узле, что позволяет обеспечить большую надежность, но возможно использовать три узла со следующей топологией соединений:

В установке используется три сервера, на каждом из которых запущено по два экземпляра Redis. Убедитесь, что каждый хост независим от других и не выйдет из строя совместно с другим. Далее выполните следующие шаги:

Для каждого узла в проектируемом кластере Redis требуется доступность не только определенного порта, но и порта выше 10000. На сервере 1 оба порта TCP 6379 и 16379 должны быть открыты. Убедитесь, что файрвол настроен корректно.

Повторите процедуру для оставшихся двух серверов, определив порты для всех пар master-slave.

| Server | Master | Slave |
|--------|--------|-------|
| 1 | 6379 | 6381 |
| 2 | 6380 | 6379 |
| 3 | 6381 | 6380 |
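
The port plan in the table can be turned into a firewall checklist. The following is a minimal Python sketch and not part of the original guide: `TOPOLOGY` restates the table, `bus_port` applies the rule that the cluster bus listens 10000 above the client port, and all names are illustrative.

```python
# Port plan from the table: server number -> (master port, slave port).
TOPOLOGY = {1: (6379, 6381), 2: (6380, 6379), 3: (6381, 6380)}

BUS_OFFSET = 10000  # the cluster bus port is the client port + 10000


def bus_port(client_port):
    """Return the cluster bus port that belongs to a client port."""
    return client_port + BUS_OFFSET


def firewall_ports(server):
    """List every TCP port that must be open on the given server."""
    ports = []
    for client_port in TOPOLOGY[server]:
        ports.extend([client_port, bus_port(client_port)])
    return sorted(ports)


if __name__ == "__main__":
    for server in sorted(TOPOLOGY):
        print(f"server {server}: open TCP ports {firewall_ports(server)}")
```

Each server runs two instances, so four ports per server have to be reachable from the other cluster members.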

Запуск узлов Master и Slave

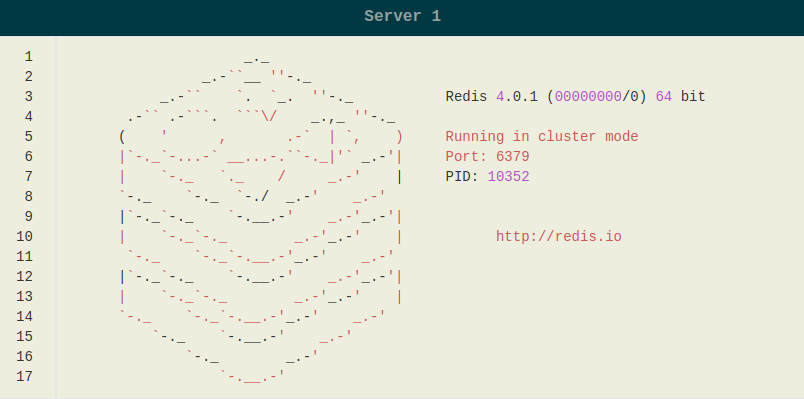

Подключитесь по SSH к серверу 1 и запустите оба экземпляра Redis:

Для других двух серверов замените a_master.conf и c_slave.conf соответствующим конфигурационным файлом. Все узлы master будут запущены в режиме кластера.

Creating the cluster with the bundled Ruby script

At this point each server is running two independent master nodes. The rest of the cluster setup is done with a Ruby script included with the Redis source code.

If Ruby is not installed yet, install it:

Install the Redis package for Ruby:

To run the script, change to the directory containing the Redis source code and configure the cluster by passing the list of ip:port pairs of the servers that will act as masters:

If the cluster is created successfully, the following response is returned:

Redis commands are case-insensitive. For clarity, however, this guide writes them in uppercase.

At this stage the cluster contains only three master servers: data is distributed across the cluster but not replicated. Let us attach one slave to each master to provide data replication.

Adding the slave nodes

The response should look like this:

Repeat this step for the two remaining nodes:

Data distribution

The Redis command-line interface lets you set and read keys with SET, GET, and other commands. You can connect to any of the master nodes and query the properties of the Redis cluster.

Use the CLUSTER INFO command to view the state of the cluster, such as its size, hash slots, and any errors.
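
CLUSTER INFO replies with plain `field:value` lines, so its output is easy to check from a script. The sketch below is a hedged Python illustration: the `SAMPLE` text is a made-up reply that uses real field names, and `parse_cluster_info` and `is_healthy` are hypothetical helper names, not part of Redis.

```python
# Illustrative CLUSTER INFO reply; the values are invented for this example.
SAMPLE = (
    "cluster_state:ok\r\n"
    "cluster_slots_assigned:16384\r\n"
    "cluster_slots_ok:16384\r\n"
    "cluster_slots_pfail:0\r\n"
    "cluster_slots_fail:0\r\n"
    "cluster_known_nodes:6\r\n"
    "cluster_size:3\r\n"
)


def parse_cluster_info(raw):
    """Turn the 'field:value' lines of CLUSTER INFO into a dict."""
    info = {}
    for line in raw.splitlines():
        if ":" not in line:
            continue  # skip blank or unexpected lines
        key, _, value = line.partition(":")
        info[key] = int(value) if value.isdigit() else value
    return info


def is_healthy(info):
    """Assumed health rule: state ok, all 16384 slots assigned, none failed."""
    return (
        info.get("cluster_state") == "ok"
        and info.get("cluster_slots_assigned") == 16384
        and info.get("cluster_slots_fail") == 0
    )


if __name__ == "__main__":
    parsed = parse_cluster_info(SAMPLE)
    print(parsed["cluster_size"], "masters, healthy:", is_healthy(parsed))
```

In practice the raw text would come from running CLUSTER INFO against any node. The health rule here is only one reasonable choice.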

To check how data is distributed, you can set a few key-value pairs.

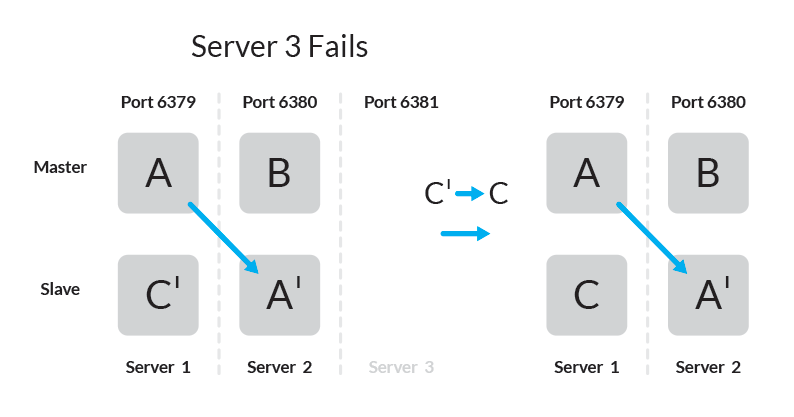

Slave behavior when a master fails

With this topology the cluster remains fully operational if one of the servers fails: the slave left without its master becomes a master itself.

To test this, add a key-value pair:

The key foo was added to the master on server 3 and copied to the slave on server 1.

If server 3 becomes unavailable, the slave on server 1 becomes a master and the cluster stays available.

For a key that was in a hash slot on server 3, the key-value pair is now stored on server 1.

Additional functionality such as adding nodes, creating multiple slave nodes, or resharding data is not covered in this guide. For details on these features, see the official Redis documentation.

Additional resources

The links below may be useful for more detailed information on the topic:

Redis cluster tutorial

This document is a gentle introduction to Redis Cluster that does not rely on hard-to-understand distributed systems concepts. It explains how to set up a cluster, test it, and operate it, without going into the details covered in the Redis Cluster specification; it only describes how the system behaves from the user’s point of view.

However this tutorial tries to provide information about the availability and consistency characteristics of Redis Cluster from the point of view of the final user, stated in a simple to understand way.

Note this tutorial requires Redis version 3.0 or higher.

If you plan to run a serious Redis Cluster deployment, the more formal specification is suggested reading, even if not strictly required. It is still a good idea to start with this document, play with Redis Cluster for some time, and only later read the specification.

Redis Cluster 101

Redis Cluster provides a way to run a Redis installation where data is automatically sharded across multiple Redis nodes.

Redis Cluster also provides some degree of availability during partitions, which in practical terms means the ability to continue operating when some nodes fail or are unable to communicate. However, the cluster stops operating in the event of larger failures (for example when the majority of masters are unavailable).

So in practical terms, what do you get with Redis Cluster?

Redis Cluster TCP ports

Every Redis Cluster node requires two open TCP ports: the normal Redis TCP port used to serve clients, for example 6379, and a second port called the cluster bus port. The cluster bus port is derived by adding 10000 to the data port (16379 in this example) or set explicitly by overriding it with the cluster-port config.

This second, high port is used for the cluster bus, a node-to-node communication channel that uses a binary protocol. Nodes use the cluster bus for failure detection, configuration updates, failover authorization, and so forth. Clients should never try to communicate with the cluster bus port, only with the normal Redis command port. Make sure you open both ports in your firewall, otherwise Redis Cluster nodes will not be able to communicate.

Note that for a Redis Cluster to work properly you need, for each node, the normal client communication port (usually 6379) open to all the clients that need to reach the cluster and to all the other cluster nodes (which use the client port for key migrations), and the cluster bus port reachable from all the other cluster nodes.

If you don’t open both TCP ports, your cluster will not work as expected.

The cluster bus uses a different, binary protocol, for node to node data exchange, which is more suited to exchange information between nodes using little bandwidth and processing time.

Redis Cluster and Docker

Currently Redis Cluster does not support NATted environments and in general environments where IP addresses or TCP ports are remapped.

Docker uses a technique called port mapping: programs running inside Docker containers may be exposed with a different port compared to the one the program believes to be using. This is useful in order to run multiple containers using the same ports, at the same time, in the same server.

Redis Cluster data sharding

Redis Cluster does not use consistent hashing, but a different form of sharding where every key is conceptually part of what we call a hash slot.

There are 16384 hash slots in Redis Cluster, and to compute what is the hash slot of a given key, we simply take the CRC16 of the key modulo 16384.
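
The slot computation can be sketched in a few lines of Python. This is an illustrative sketch, not Redis source code: it assumes the CRC16 variant is the XMODEM one (polynomial 0x1021, initial value 0), which is what the Redis Cluster specification describes, and it deliberately ignores hash tags. The function names are hypothetical.

```python
def crc16_xmodem(data):
    """CRC16 with polynomial 0x1021 and initial value 0, processed MSB first."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def hash_slot(key):
    """Map a key to one of the 16384 hash slots (hash tags not handled)."""
    return crc16_xmodem(key.encode("utf-8")) % 16384


if __name__ == "__main__":
    for key in ("foo", "hello", "user:1000"):
        print(key, "->", hash_slot(key))
```

A running cluster reports the same number for a key through the CLUSTER KEYSLOT command, which is a convenient way to cross-check a client-side implementation.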

Every node in a Redis Cluster is responsible for a subset of the hash slots. For example, you may have a cluster with 3 nodes, where node A holds hash slots 0 to 5500, node B holds hash slots 5501 to 11000, and node C holds hash slots 11001 to 16383.

This makes it easy to add and remove nodes in the cluster. For example, if I want to add a new node D, I need to move some hash slots from nodes A, B, C to D. Similarly, if I want to remove node A from the cluster, I can just move the hash slots served by A to B and C. Once node A is empty, I can remove it from the cluster completely.

Because moving hash slots from one node to another does not require stopping operations, adding and removing nodes, or changing the percentage of hash slots held by nodes, does not require any downtime.

Redis Cluster supports multiple key operations as long as all the keys involved in a single command execution (or whole transaction, or Lua script execution) belong to the same hash slot. The user can force multiple keys to be part of the same hash slot by using a concept called hash tags.

Hash tags are documented in the Redis Cluster specification, but the gist is that if there is a substring between {} brackets in a key, only what is inside the brackets is hashed, so two keys that share the same tag are guaranteed to be in the same hash slot.
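
The hash tag rule can be illustrated with a small Python sketch. It follows the rule as given in the Redis Cluster specification (the first `{`, the first `}` after it, and at least one character in between); the function name `hashed_part` is hypothetical, and the returned string is what would then be fed to CRC16.

```python
def hashed_part(key):
    """Return the part of the key that is hashed under the hash tag rule."""
    start = key.find("{")
    if start == -1:
        return key  # no opening brace: hash the whole key
    end = key.find("}", start + 1)
    if end == -1 or end == start + 1:
        return key  # no closing brace, or empty tag "{}": hash the whole key
    return key[start + 1:end]


if __name__ == "__main__":
    for key in ("user:{42}:profile", "user:{42}:cart", "plain", "foo{}bar"):
        print(repr(key), "->", repr(hashed_part(key)))
```

Keys such as `user:{42}:profile` and `user:{42}:cart` reduce to the same hashed string, so they land in the same slot and can be used together in multi-key commands.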

Redis Cluster master-replica model

In order to remain available when a subset of master nodes are failing or are not able to communicate with the majority of nodes, Redis Cluster uses a master-replica model where every hash slot has from 1 (the master itself) to N replicas (N-1 additional replica nodes).

In our example cluster with nodes A, B, C, if node B fails the cluster is not able to continue, since we no longer have a way to serve hash slots in the range 5501-11000.

However when the cluster is created (or at a later time) we add a replica node to every master, so that the final cluster is composed of A, B, C that are master nodes, and A1, B1, C1 that are replica nodes. This way, the system is able to continue if node B fails.

Node B1 replicates B, so if B fails, the cluster will promote node B1 as the new master and will continue to operate correctly.

However, note that if nodes B and B1 fail at the same time, Redis Cluster is not able to continue to operate.

Redis Cluster consistency guarantees

Redis Cluster is not able to guarantee strong consistency. In practical terms this means that under certain conditions it is possible that Redis Cluster will lose writes that were acknowledged by the system to the client.

The first reason why Redis Cluster can lose writes is that it uses asynchronous replication. This means that during a write the following happens: your client writes to master B, master B replies OK to your client, and master B propagates the write to its replicas B1, B2, B3.

As you can see, B does not wait for an acknowledgement from B1, B2, B3 before replying to the client, since this would be a prohibitive latency penalty for Redis. So if your client writes something and B acknowledges the write but crashes before it can send the write to its replicas, one of the replicas (which did not receive the write) can be promoted to master, and the write is lost forever.

This is very similar to what happens with most databases that are configured to flush data to disk every second, so it is a scenario you are already able to reason about because of past experiences with traditional database systems not involving distributed systems. Similarly you can improve consistency by forcing the database to flush data to disk before replying to the client, but this usually results in prohibitively low performance. That would be the equivalent of synchronous replication in the case of Redis Cluster.

Basically, there is a trade-off to be made between performance and consistency.

Redis Cluster has support for synchronous writes when absolutely needed, implemented via the WAIT command. This makes losing writes a lot less likely. However, note that Redis Cluster does not implement strong consistency even when synchronous replication is used: it is always possible, under more complex failure scenarios, that a replica that was not able to receive the write will be elected as master.

There is another notable scenario where Redis Cluster will lose writes, that happens during a network partition where a client is isolated with a minority of instances including at least a master.

Take as an example our 6 nodes cluster composed of A, B, C, A1, B1, C1, with 3 masters and 3 replicas. There is also a client, that we will call Z1.

After a partition occurs, it is possible that in one side of the partition we have A, C, A1, B1, C1, and in the other side we have B and Z1.

Z1 is still able to write to B, which will accept its writes. If the partition heals in a very short time, the cluster will continue normally. However, if the partition lasts enough time for B1 to be promoted to master on the majority side of the partition, the writes that Z1 has sent to B in the mean time will be lost.

Note that there is a maximum window to the amount of writes Z1 will be able to send to B: if enough time has elapsed for the majority side of the partition to elect a replica as master, every master node in the minority side will have stopped accepting writes.

This amount of time is a very important configuration directive of Redis Cluster, and is called the node timeout.

After node timeout has elapsed, a master node is considered to be failing and can be replaced by one of its replicas. Similarly, if node timeout elapses while a master node is unable to reach the majority of the other master nodes, it enters an error state and stops accepting writes.

Redis Cluster configuration parameters

We are about to create an example cluster deployment. Before we continue, let’s introduce the configuration parameters that Redis Cluster introduces in the redis.conf file. Some will be obvious, others will be more clear as you continue reading.

Creating and using a Redis Cluster

Note: to deploy a Redis Cluster manually it is very important to learn certain operational aspects of it. However if you want to get a cluster up and running ASAP (As Soon As Possible) skip this section and the next one and go directly to Creating a Redis Cluster using the create-cluster script.

To create a cluster, the first thing we need is to have a few empty Redis instances running in cluster mode. This basically means that clusters are not created using normal Redis instances as a special mode needs to be configured so that the Redis instance will enable the Cluster specific features and commands.

The following is a minimal Redis cluster configuration file:

Note that the minimal cluster that works as expected must contain at least three master nodes. For your first tests it is strongly suggested that you start a six-node cluster with three masters and three replicas.

To do so, enter a new directory, and create the following directories named after the port number of the instance we’ll run inside any given directory.

Create a redis.conf file inside each of the directories, from 7000 to 7005. As a template for your configuration file just use the small example above, but make sure to replace the port number 7000 with the right port number according to the directory name.
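
Creating six directories and six nearly identical files by hand is error-prone, so the step can be scripted. The following Python sketch is an illustration only: the directives in `TEMPLATE` are real redis.conf options, but the specific values (a 5000 ms node timeout, AOF enabled) are assumptions for a test setup, and `write_configs` is a hypothetical helper name.

```python
import tempfile
from pathlib import Path

# Assumed minimal cluster configuration; adjust the values for your setup.
TEMPLATE = """port {port}
cluster-enabled yes
cluster-config-file nodes.conf
cluster-node-timeout 5000
appendonly yes
"""


def write_configs(base_dir, ports=range(7000, 7006)):
    """Create one directory per port under base_dir, each with a redis.conf."""
    created = []
    for port in ports:
        node_dir = Path(base_dir) / str(port)
        node_dir.mkdir(parents=True, exist_ok=True)
        conf = node_dir / "redis.conf"
        conf.write_text(TEMPLATE.format(port=port))
        created.append(conf)
    return created


if __name__ == "__main__":
    # Demo in a throwaway directory; pass a real path to keep the files.
    with tempfile.TemporaryDirectory() as tmp:
        for path in write_configs(tmp):
            print(path.relative_to(tmp))
```

Each instance is then started from inside its own directory, so every node writes its own nodes.conf next to its redis.conf.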

Now copy your redis-server executable, compiled from the latest sources in the unstable branch at GitHub, into the cluster-test directory, and finally open 6 terminal tabs in your favorite terminal application.

Start every instance like this, one per tab:

As you can see from the logs of every instance, since no nodes.conf file existed, every node assigns itself a new ID.

This ID will be used forever by this specific instance so that the instance has a unique name in the context of the cluster. Every node remembers every other node by these IDs, not by IP or port. IP addresses and ports may change, but the unique node identifier will never change for the whole life of the node. We call this identifier simply the Node ID.

Creating the cluster

Now that we have a number of instances running, we need to create our cluster by writing some meaningful configuration to the nodes.

To create your cluster for Redis 5 with redis-cli simply type:

Using redis-trib.rb for Redis 4 or 3 type:

Obviously the only setup with our requirements is to create a cluster with 3 masters and 3 replicas.
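
For Redis 5 and later, the creation command takes the list of node addresses plus a `--cluster-replicas` option. The Python sketch below only builds the argument list and does not run anything. The helper name `cluster_create_command` is hypothetical, the addresses assume the six local instances on ports 7000 to 7005, and the three-master check mirrors the minimum stated above.

```python
def cluster_create_command(nodes, replicas_per_master=1):
    """Build the argv for `redis-cli --cluster create` without executing it."""
    if replicas_per_master < 0:
        raise ValueError("replicas_per_master must not be negative")
    masters = len(nodes) // (replicas_per_master + 1)
    if masters < 3:
        raise ValueError("a working cluster needs at least three masters")
    return [
        "redis-cli", "--cluster", "create",
        *nodes,
        "--cluster-replicas", str(replicas_per_master),
    ]


if __name__ == "__main__":
    addresses = [f"127.0.0.1:{port}" for port in range(7000, 7006)]
    print(" ".join(cluster_create_command(addresses)))
```

With six addresses and one replica per master, the result is a cluster of three masters and three replicas. The list could be handed to `subprocess.run` once the instances are up.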

redis-cli will propose a configuration. Accept the proposed configuration by typing yes. The cluster will be configured and joined, which means the instances will be bootstrapped into talking with each other. Finally, if everything went well, you’ll see a message like this:

This means that there is at least a master instance serving each of the 16384 slots available.

Creating a Redis Cluster using the create-cluster script

If you don’t want to create a Redis Cluster by configuring and executing individual instances manually as explained above, there is a much simpler system (but you’ll not learn the same amount of operational details).

Just check the utils/create-cluster directory in the Redis distribution. Inside there is a script called create-cluster (same name as the directory it is contained in); it’s a simple bash script. To start a 6-node cluster with 3 masters and 3 replicas, just type the following commands:

Reply yes in step 2 when the redis-cli utility wants you to accept the cluster layout.

You can now interact with the cluster, the first node will start at port 30001 by default. When you are done, stop the cluster with:

Please read the README inside this directory for more information on how to run the script.

Playing with the cluster

At this stage one of the problems with Redis Cluster is the lack of client libraries implementations.

I’m aware of the following implementations:

An easy way to test Redis Cluster is either to try any of the above clients or simply the redis-cli command line utility. The following is an example of interaction using the latter:

Note: if you created the cluster using the script your nodes may listen to different ports, starting from 30001 by default.

The redis-cli cluster support is very basic so it always uses the fact that Redis Cluster nodes are able to redirect a client to the right node. A serious client is able to do better than that, and cache the map between hash slots and nodes addresses, to directly use the right connection to the right node. The map is refreshed only when something changed in the cluster configuration, for example after a failover or after the system administrator changed the cluster layout by adding or removing nodes.
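
The idea of a cached slot map can be sketched as follows. This Python example is a simplified illustration, not the code of any real client: the slot ranges and node addresses are assumed example values, `SlotCache` is a hypothetical class, and on a `MOVED <slot> <host:port>` redirection it updates only the single slot, whereas a real client would usually refresh the whole map.

```python
class SlotCache:
    """Client-side cache of which node serves each of the 16384 hash slots."""

    SLOTS = 16384

    def __init__(self):
        self._owner = [None] * self.SLOTS

    def assign(self, first, last, node):
        """Record that slots first..last (inclusive) are served by node."""
        for slot in range(first, last + 1):
            self._owner[slot] = node

    def node_for(self, slot):
        """Return the cached node address for a slot, or None if unknown."""
        return self._owner[slot]

    def handle_moved(self, error):
        """Apply a redirection of the form 'MOVED <slot> <host:port>'."""
        kind, slot, node = error.split()
        if kind != "MOVED":
            raise ValueError("not a MOVED redirection: " + error)
        self._owner[int(slot)] = node
        return node


if __name__ == "__main__":
    cache = SlotCache()
    cache.assign(0, 5500, "127.0.0.1:7000")
    cache.assign(5501, 11000, "127.0.0.1:7001")
    cache.assign(11001, 16383, "127.0.0.1:7002")
    print(cache.node_for(12182))
    cache.handle_moved("MOVED 12182 127.0.0.1:7005")
    print(cache.node_for(12182))
```

With such a map a client sends each command straight to the right node and only pays for a redirection after the cluster layout has changed.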

Writing an example app with redis-rb-cluster

Before going forward showing how to operate the Redis Cluster, doing things like a failover, or a resharding, we need to create some example application or at least to be able to understand the semantics of a simple Redis Cluster client interaction.

In this way we can run an example and at the same time try to make nodes fail, or start a resharding, to see how Redis Cluster behaves under real-world conditions. It is not very helpful to see what happens while nobody is writing to the cluster.

This section explains some basic usage of redis-rb-cluster showing two examples. The first is the following, and is the example.rb file inside the redis-rb-cluster distribution:

The program looks more complex than it usually should because it is designed to show errors on the screen instead of exiting with an exception, so every operation performed with the cluster is wrapped in begin rescue blocks.

Line 14 is the first interesting line in the program. It creates the Redis Cluster object, using as arguments a list of startup nodes, the maximum number of connections this object is allowed to take against different nodes, and finally the timeout after which a given operation is considered to have failed.

The startup nodes don’t need to be all the nodes of the cluster. The important thing is that at least one node is reachable. Also note that redis-rb-cluster updates this list of startup nodes as soon as it is able to connect with the first node. You should expect such a behavior with any other serious client.

Now that we have the Redis Cluster object instance stored in the rc variable, we are ready to use the object as if it were a normal Redis object instance.

However note how it is a while loop, as we want to try again and again even if the cluster is down and is returning errors. Normal applications don’t need to be so careful.

Lines between 28 and 37 start the main loop where the keys are set or an error is displayed.

Note the sleep call at the end of the loop. In your tests you can remove the sleep if you want to write to the cluster as fast as possible (bearing in mind that this is a busy loop without real parallelism, so you’ll get the usual 10k ops/second in the best of conditions).

Normally writes are slowed down in order for the example application to be easier to follow by humans.

Starting the application produces the following output:

This is not a very interesting program and we’ll use a better one in a moment but we can already see what happens during a resharding when the program is running.

Resharding the cluster

Now we are ready to try a cluster resharding. To do this, please keep the example.rb program running so that you can see whether there is any impact on it. You may also want to comment out the sleep call in order to have a more serious write load during resharding.

Resharding basically means to move hash slots from a set of nodes to another set of nodes, and like cluster creation it is accomplished using the redis-cli utility.

To start a resharding just type:

You only need to specify a single node, redis-cli will find the other nodes automatically.

Currently redis-cli can only reshard with the administrator’s support: you can’t just say move 5% of slots from this node to the other one (although this would be pretty trivial to implement). So it starts with questions. The first is how big a resharding you want to do:

We can try to reshard 1000 hash slots, that should already contain a non trivial amount of keys if the example is still running without the sleep call.

Then redis-cli needs to know what is the target of the resharding, that is, the node that will receive the hash slots. I’ll use the first master node, that is, 127.0.0.1:7000, but I need to specify the Node ID of the instance. This was already printed in a list by redis-cli, but I can always find the ID of a node with the following command if I need:

Ok so my target node is 97a3a64667477371c4479320d683e4c8db5858b1.

Now you’ll be asked from which nodes you want to take those keys. I’ll just type all in order to take some hash slots from all the other master nodes.

After the final confirmation you’ll see a message for every slot that redis-cli is going to move from a node to another, and a dot will be printed for every actual key moved from one side to the other.

While the resharding is in progress you should be able to see your example program running unaffected. You can stop and restart it multiple times during the resharding if you want.

At the end of the resharding, you can test the health of the cluster with the following command:

All the slots will be covered as usual, but this time the master at 127.0.0.1:7000 will have more hash slots, something around 6461.

Scripting a resharding operation

Resharding can be performed automatically without the need to manually enter the parameters in an interactive way. This is possible using a command line like the following:

This makes it possible to build some automation if you are likely to reshard often. However, there is currently no way for redis-cli to automatically rebalance the cluster by checking the distribution of keys across the cluster nodes and intelligently moving slots as needed. This feature will be added in the future.
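
A non-interactive reshard passes the answers to the interactive questions as options. The Python sketch below only assembles the argument list for Redis 5 and later. The helper name `reshard_command` is hypothetical, and the example values reuse the entry node 127.0.0.1:7000, the 1000 slots, the `all` source, and the target Node ID from the interactive walkthrough.

```python
def reshard_command(entry_node, source, target_id, slots):
    """Build the argv for a scripted `redis-cli --cluster reshard`.

    source is either "all" or a comma-separated list of source Node IDs.
    """
    if not 0 < slots <= 16384:
        raise ValueError("slots must be between 1 and 16384")
    return [
        "redis-cli", "--cluster", "reshard", entry_node,
        "--cluster-from", source,
        "--cluster-to", target_id,
        "--cluster-slots", str(slots),
        "--cluster-yes",  # skip the final confirmation prompt
    ]


if __name__ == "__main__":
    argv = reshard_command(
        "127.0.0.1:7000",
        "all",
        "97a3a64667477371c4479320d683e4c8db5858b1",
        1000,
    )
    print(" ".join(argv))
```

Because `--cluster-yes` removes the last safety prompt, it is worth printing and reviewing the command before wiring it into any automation.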

A more interesting example application

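The example application we wrote earlier is not very good. It writes to the cluster in a simple way without even checking whether what was written is the right thing.

From our point of view, the cluster receiving the writes could just set the key foo to 42 on every operation, and we would not notice at all.

The consistency-test application is more interesting. It increments a set of counters with INCR, and instead of just writing it does two additional things: when a counter is updated using INCR, the application remembers the write, and before every write it reads a random counter and checks that the value matches the one it has in memory.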

What this means is that this application is a simple consistency checker, and is able to tell you if the cluster lost some write, or if it accepted a write that we did not receive acknowledgment for. In the first case we’ll see a counter having a value that is smaller than the one we remember, while in the second case the value will be greater.
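
The checking logic can be sketched without a real cluster. The Python example below is a simplified illustration, not the consistency-test program itself: `FakeCluster` is an in-memory stand-in, `ConsistencyChecker` is a hypothetical class, and for determinism it re-reads the counter it is about to write instead of a random one.

```python
class FakeCluster:
    """In-memory stand-in for a cluster, supporting only INCR, GET and SET."""

    def __init__(self):
        self.data = {}

    def incr(self, key):
        self.data[key] = self.data.get(key, 0) + 1
        return self.data[key]

    def get(self, key):
        return self.data.get(key, 0)

    def set(self, key, value):
        self.data[key] = value


class ConsistencyChecker:
    """Remembers acknowledged writes and compares them with what is stored."""

    def __init__(self, cluster):
        self.cluster = cluster
        self.expected = {}   # counter name -> value we believe it has
        self.lost = 0        # acknowledged writes the store forgot
        self.unacked = 0     # writes present that we never saw acknowledged

    def check(self, key):
        if key not in self.expected:
            return
        actual = self.cluster.get(key)
        expected = self.expected[key]
        if actual < expected:
            self.lost += expected - actual
        elif actual > expected:
            self.unacked += actual - expected
        self.expected[key] = actual  # resync so a discrepancy counts once

    def write(self, key):
        self.check(key)
        self.expected[key] = self.cluster.incr(key)


if __name__ == "__main__":
    cluster = FakeCluster()
    checker = ConsistencyChecker(cluster)
    for _ in range(114):
        checker.write("counter")
    cluster.set("counter", 0)  # simulate a manual reset behind our back
    checker.write("counter")
    print("lost:", checker.lost, "unacknowledged:", checker.unacked)
```

A smaller stored value than remembered counts as lost writes, and a larger one counts as writes that were applied without an acknowledgement reaching the client.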

Running the consistency-test application produces a line of output every second:

The line shows the number of Reads and Writes performed, and the number of errors (query not accepted because of errors since the system was not available).

If some inconsistency is found, new lines are added to the output. This is what happens, for example, if I reset a counter manually while the program is running:

When I set the counter to 0 the real value was 114, so the program reports 114 lost writes (INCR commands that are not remembered by the cluster).

This program is much more interesting as a test case, so we’ll use it to test the Redis Cluster failover.

Testing the failover

Note: during this test, you should keep a tab open with the consistency test application running.

In order to trigger the failover, the simplest thing we can do (that is also the semantically simplest failure that can occur in a distributed system) is to crash a single process, in our case a single master.

We can identify a master and crash it with the following command:

Ok, so 7000, 7001, and 7002 are masters. Let’s crash node 7002 with the DEBUG SEGFAULT command:

Now we can look at the output of the consistency test to see what it reported.

As you can see during the failover the system was not able to accept 578 reads and 577 writes, however no inconsistency was created in the database. This may sound unexpected as in the first part of this tutorial we stated that Redis Cluster can lose writes during the failover because it uses asynchronous replication. What we did not say is that this is not very likely to happen because Redis sends the reply to the client, and the commands to replicate to the replicas, about at the same time, so there is a very small window to lose data. However the fact that it is hard to trigger does not mean that it is impossible, so this does not change the consistency guarantees provided by Redis cluster.

We can now check what is the cluster setup after the failover (note that in the meantime I restarted the crashed instance so that it rejoins the cluster as a replica):

Now the masters are running on ports 7000, 7001 and 7005. What was previously a master, that is the Redis instance running on port 7002, is now a replica of 7005.

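The output of the CLUSTER NODES command may look intimidating, but it is actually pretty simple, and is composed of the following tokens: the Node ID; ip:port; flags (master, replica, myself, fail, and so on); if the node is a replica, the Node ID of its master; the time of the last pending PING still waiting for a reply; the time of the last PONG received; the configuration epoch for this node (see the Cluster specification); the status of the link to this node; and the slots served.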
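
Each line of CLUSTER NODES is a space-separated record, so it can be split into named fields. The Python sketch below is an illustration under stated assumptions: `SAMPLE_LINE` is a made-up line (its Node ID and slot range are borrowed from the examples in this tutorial, the timestamps are invented), and `parse_node_line` is a hypothetical helper that skips the bracketed entries used for slots in migration.

```python
# Illustrative line; newer Redis versions append "@<bus port>" to the address.
SAMPLE_LINE = (
    "3c3a0c74aae0b56170ccb03a76b60cfe7dc1912e 127.0.0.1:7005@17005 "
    "master - 0 1385503418521 3 connected 11423-16383"
)


def parse_node_line(line):
    """Split one CLUSTER NODES line into a dict of named fields."""
    parts = line.split()
    node_id, addr, flags, master, ping, pong, epoch, link = parts[:8]
    slots = []
    for token in parts[8:]:
        if token.startswith("["):
            continue  # slot currently being migrated or imported
        first, _, last = token.partition("-")
        slots.append((int(first), int(last or first)))
    return {
        "id": node_id,
        "address": addr.split("@")[0],
        "flags": flags.split(","),
        "master_id": None if master == "-" else master,
        "ping_sent": int(ping),
        "pong_recv": int(pong),
        "config_epoch": int(epoch),
        "link_state": link,
        "slots": slots,
    }


if __name__ == "__main__":
    node = parse_node_line(SAMPLE_LINE)
    print(node["address"], node["flags"], node["slots"])
```

Filtering the parsed records on the `master` flag is an easy way to pick a node to crash or fail over in a test.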

Manual failover

Sometimes it is useful to force a failover without actually causing any problem on a master. For example in order to upgrade the Redis process of one of the master nodes it is a good idea to failover it in order to turn it into a replica with minimal impact on availability.

Manual failovers are supported by Redis Cluster using the CLUSTER FAILOVER command, that must be executed in one of the replicas of the master you want to failover.

Manual failovers are special and are safer than failovers resulting from actual master failures, since they occur in a way that avoids data loss in the process: clients are switched from the original master to the new master only when the system is sure that the new master has processed all the replication stream from the old one.

This is what you see in the replica log when you perform a manual failover:

Basically clients connected to the master we are failing over are stopped. At the same time the master sends its replication offset to the replica, that waits to reach the offset on its side. When the replication offset is reached, the failover starts, and the old master is informed about the configuration switch. When the clients are unblocked on the old master, they are redirected to the new master.

Adding a new node

Adding a new node is basically the process of adding an empty node and then either moving some data into it, if it is a new master, or telling it to set itself up as a replica of a known node, if it is a replica.

We’ll show both, starting with the addition of a new master instance.

In both cases the first step to perform is adding an empty node.

This is as simple as starting a new node on port 7006 (we already used 7000 to 7005 for our existing 6 nodes) with the same configuration used for the other nodes, except for the port number. To conform with the setup we used for the previous nodes, do the following:

At this point the server should be running.

Now we can use redis-cli as usual in order to add the node to the existing cluster.

As you can see I used the add-node command specifying the address of the new node as first argument, and the address of a random existing node in the cluster as second argument.

In practical terms redis-cli did very little to help us here: it just sent a CLUSTER MEET message to the node, something that can also be done manually. However, redis-cli also checks the state of the cluster before operating, so it is a good idea to always perform cluster operations via redis-cli, even when you know how the internals work.

Now we can connect to the new node to see if it really joined the cluster:

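Note that since this node is already connected to the cluster, it is already able to redirect client queries correctly and is, generally speaking, part of the cluster. However, it has two peculiarities compared to the other masters: it holds no data, since it has no assigned hash slots, and because it is a master without assigned slots, it does not participate in the election process when a replica wants to become a master.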

Adding a new node as a replica

Note that the command line here is exactly like the one we used to add a new master, so we are not specifying to which master we want to add the replica. In this case redis-cli will add the new node as a replica of a random master among the masters with fewer replicas.

However you can specify exactly what master you want to target with your new replica with the following command line:

This way we assign the new replica to a specific master.

A more manual way to add a replica to a specific master is to add the new node as an empty master, and then turn it into a replica using the CLUSTER REPLICATE command. This also works if the node was added as a replica but you want to move it as a replica of a different master.

For example in order to add a replica for the node 127.0.0.1:7005 that is currently serving hash slots in the range 11423-16383, that has a Node ID 3c3a0c74aae0b56170ccb03a76b60cfe7dc1912e, all I need to do is to connect with the new node (already added as empty master) and send the command:

That’s it. Now we have a new replica for this set of hash slots, and all the other nodes in the cluster already know (after a few seconds needed to update their config). We can verify with the following command:

The node 3c3a0c... now has two replicas, running on ports 7002 (the existing one) and 7006 (the new one).

Removing a node

To remove a replica node just use the del-node command of redis-cli:

The first argument is just a random node in the cluster, the second argument is the ID of the node you want to remove.

You can remove a master node in the same way. However, a master node must be empty before it can be removed. If the master is not empty, you first need to reshard its data away to all the other master nodes.

An alternative way to remove a master node is to perform a manual failover to one of its replicas and remove the node after it has turned into a replica of the new master. Obviously this does not help when you want to reduce the actual number of masters in your cluster; in that case, a resharding is needed.
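A manual failover is requested with `CLUSTER FAILOVER`, which has to be sent to a replica of the master being replaced, not to the master itself. A minimal sketch, assuming the replica runs locally; `promote_replica` is a hypothetical helper name.

```shell
# Path to redis-cli; override REDIS_CLI to use a different binary.
REDIS_CLI="${REDIS_CLI:-redis-cli}"

# promote_replica REPLICA_PORT   (hypothetical helper name)
# Asks the local replica on REPLICA_PORT to take over from its current master.
promote_replica() {
    "$REDIS_CLI" -p "$1" cluster failover
}

# Example: promote the replica on port 7002; its old master becomes a replica
# and can then be removed like any other replica.
#   promote_replica 7002
```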

*Replicas migration

In Redis Cluster it is possible to reconfigure a replica to replicate with a different master at any time just using the following command:
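The command is missing at this point; it is the `CLUSTER REPLICATE` command, sent to the replica that should change master. A sketch that also takes a host, for replicas that are not local (`retarget_replica` is a hypothetical helper name, and the host in the example is illustrative):

```shell
# Path to redis-cli; override REDIS_CLI to use a different binary.
REDIS_CLI="${REDIS_CLI:-redis-cli}"

# retarget_replica REPLICA_HOST REPLICA_PORT NEW_MASTER_ID   (hypothetical helper name)
# Tells the replica at REPLICA_HOST:REPLICA_PORT to replicate NEW_MASTER_ID instead.
retarget_replica() {
    "$REDIS_CLI" -h "$1" -p "$2" cluster replicate "$3"
}

# Example, with an illustrative address and a placeholder ID:
#   retarget_replica 127.0.0.1 7006 <master-node-id>
```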

However there is a special scenario where you want replicas to move from one master to another one automatically, without the help of the system administrator. The automatic reconfiguration of replicas is called replicas migration and is able to improve the reliability of a Redis Cluster.

Note: you can read the details of replicas migration in the Redis Cluster Specification, here we’ll only provide some information about the general idea and what you should do in order to benefit from it.

The reason you may want to let your cluster replicas move from one master to another under certain conditions is that a Redis Cluster is usually only as resistant to failures as the number of replicas attached to a given master.

For example, a cluster where every master has a single replica can't continue operating if a master and its replica fail at the same time, simply because no other instance has a copy of the hash slots the master was serving. Net-splits are likely to isolate a number of nodes at the same time, but many other kinds of failures, such as hardware or software failures local to a single node, are unlikely to happen simultaneously. So in a cluster where every master has one replica, it is possible that the replica is killed at 4am and the master is killed at 6am. This still results in a cluster that can no longer operate.

To improve the reliability of the system we could add additional replicas to every master, but this is expensive. Replica migration lets you add more replicas to just a few masters. Say you have 10 masters with 1 replica each, for a total of 20 instances, and you add, for example, 3 more instances as replicas of some of your masters, so that certain masters have more than a single replica.

With replicas migration, if a master is left without replicas, a replica from a master that has multiple replicas migrates to the orphaned master. So after your replica goes down at 4am as in the example above, another replica takes its place, and when the master fails as well at 6am, there is still a replica that can be elected so that the cluster can continue to operate.

So what should you know about replicas migration, in short?
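One setting worth knowing in this context is `cluster-migration-barrier`, which controls how many replicas a master must keep before one of its replicas is allowed to migrate. A small sketch for inspecting it on a running node, assuming a local node on port 7000 (an illustrative port); `get_migration_barrier` is a hypothetical helper name.

```shell
# Path to redis-cli; override REDIS_CLI to use a different binary.
REDIS_CLI="${REDIS_CLI:-redis-cli}"

# get_migration_barrier PORT   (hypothetical helper name)
# Prints the current cluster-migration-barrier setting of the local node on PORT.
get_migration_barrier() {
    "$REDIS_CLI" -p "$1" config get cluster-migration-barrier
}

# Example:
#   get_migration_barrier 7000
```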

*Upgrading nodes in a Redis Cluster

Upgrading replica nodes is easy since you just need to stop the node and restart it with an updated version of Redis. If there are clients scaling reads using replica nodes, they should be able to reconnect to a different replica if a given one is not available.

Upgrading masters is a bit more complex, and the suggested procedure is:
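The steps are not listed in this copy of the text. As a hedged sketch of one way to do it, based on the manual failover described earlier: fail the master over to one of its replicas, wait until the old master reports the replica role, then upgrade it like any other replica. The helper names and ports are assumptions (7005 as the master, 7002 as its replica).

```shell
# Path to redis-cli; override REDIS_CLI to use a different binary.
REDIS_CLI="${REDIS_CLI:-redis-cli}"

# start_failover REPLICA_PORT   (hypothetical helper name)
# Asks the local replica on REPLICA_PORT to take over from its master.
start_failover() {
    "$REDIS_CLI" -p "$1" cluster failover
}

# is_replica PORT   (hypothetical helper name)
# Succeeds once the local node on PORT reports the replica role.
is_replica() {
    "$REDIS_CLI" -p "$1" info replication | grep -q '^role:slave'
}

# Example:
#   start_failover 7002
#   until is_replica 7005; do sleep 1; done
#   # ...stop the node on 7005, install the new Redis version, restart it...
```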

Following this procedure you should upgrade one node after the other until all the nodes are upgraded.

*Migrating to Redis Cluster

Users willing to migrate to Redis Cluster may have just a single master, or may already be using a preexisting sharding setup, where keys are split among N nodes using some in-house algorithm or a sharding algorithm implemented by their client library or Redis proxy.

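In both cases it is possible to migrate to Redis Cluster easily; the most important detail is whether the application uses multiple-key operations, and how. There are three different cases:

1. Multiple-key operations are not used.
2. Multiple-key operations are used, but only with keys that share the same hash tag.
3. Multiple-key operations are used with keys that do not share the same hash tag.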

The third case is not handled by Redis Cluster: the application must be modified so that it either does not use multi-key operations or uses them only on keys sharing the same hash tag.
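To check whether two keys share a hash slot, you can ask any cluster node with `CLUSTER KEYSLOT`. A small sketch, assuming a local node on port 7000; the key names and the helper name `key_slot` are made up for illustration. Keys with the same hash tag (the part between `{` and `}`) should report the same slot.

```shell
# Path to redis-cli; override REDIS_CLI to use a different binary.
REDIS_CLI="${REDIS_CLI:-redis-cli}"

# key_slot PORT KEY   (hypothetical helper name)
# Prints the hash slot that the local node on PORT computes for KEY.
key_slot() {
    "$REDIS_CLI" -p "$1" cluster keyslot "$2"
}

# Example: both keys carry the hash tag {user1000}, so the two calls
# are expected to print the same slot number.
#   key_slot 7000 '{user1000}.following'
#   key_slot 7000 '{user1000}.followers'
```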

Cases 1 and 2 are covered, so we'll focus on them. Both are handled in the same way, so no distinction is made between them below.

Assuming you have your preexisting data set split into N masters, where N=1 if you have no preexisting sharding, the following steps are needed in order to migrate your data set to Redis Cluster:

The command moves all the keys of a running instance (deleting the keys from the source instance) to the specified pre-existing Redis Cluster. However, note that if you use a Redis 2.8 instance as the source, the operation may be slow, since 2.8 does not implement migrate connection caching. You may want to restart your source instance with a Redis 3.x version before performing the operation.
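The command in question is not shown in this copy; the description matches `redis-cli --cluster import`. A minimal sketch, where `import_into_cluster` is a hypothetical helper name and both addresses in the example are illustrative assumptions:

```shell
# Path to redis-cli; override REDIS_CLI to use a different binary.
REDIS_CLI="${REDIS_CLI:-redis-cli}"

# import_into_cluster CLUSTER_NODE SOURCE_INSTANCE   (hypothetical helper name)
# Moves every key from the standalone SOURCE_INSTANCE into the cluster that
# CLUSTER_NODE belongs to; the keys are deleted from the source as they move.
import_into_cluster() {
    "$REDIS_CLI" --cluster import "$1" --cluster-from "$2"
}

# Example, with illustrative addresses:
#   import_into_cluster 127.0.0.1:7000 127.0.0.1:6379
```

With a presharded setup of N masters, the call would be repeated once per source instance.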

A note about the word slave used in this page: starting with Redis 5, the Redis project no longer uses the word slave except where backward compatibility requires it. Unfortunately, in this command the word slave is part of the protocol, so such occurrences can be removed only once this API is naturally deprecated.
